This is the first in a series of posts where we look at comparison sites across product categories, review their approach, and suggest ways to make their offerings stronger for users.

I discovered Snapsort just under two years ago and found it a refreshing change from the usual technically overdosed sites like Digital Photography Review. Apart from the fact that the pages are clean and simple to use, the best part about Snapsort is that it simplifies the user experience tremendously. It not only lets you compare two cameras against each other, but also assigns a score on a scale of 1 to 100 which makes decision-making as easy as it gets.

Without Snapsort, the purchases I’ve made over the years – the Panasonic Lumix DMC-TZ10 in Oct-2010 and the Nikon D5100 in Jul-2011 – couldn’t have given me the satisfaction of knowing I was hitting the sweet spot in terms of the best functionality afforded by my budget. And for that, I am eternally indebted to the guys at Sortable.

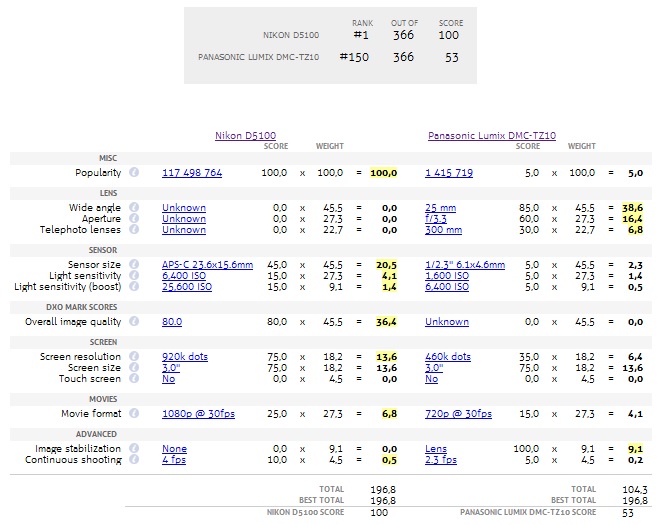

For starters, Snapsort assigns a score to each key feature of a camera (depending on that feature’s presence, strength or absence) and, using a certain weightage for every key feature, combines these into a Score for each camera. Users can evaluate cameras by looking at their total Score or by drilling down into the score for each of the key features. Another good thing is that the final Score is a Relative Score against the best camera in that category. So a camera with a Score of 100/100 isn’t a utopian model of the world’s most perfect-but-impossible-to-build camera, but a real camera that is better than every other camera in its category today.
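To make the scoring idea concrete, here is a minimal sketch in Python. I don’t know Snapsort’s actual formula, so the feature names, weights, feature scores and function names below are all made up for illustration. The only thing taken from the description above is the shape of the calculation: a weighted sum per camera, rescaled so that the best camera in the category gets 100.

```python
def weighted_score(feature_scores, weights):
    """Weighted average of per-feature scores (each in 0..1), on a 0..100 scale."""
    total_weight = sum(weights.values())
    raw = sum(w * feature_scores.get(name, 0.0) for name, w in weights.items())
    return 100.0 * raw / total_weight


def relative_scores(cameras, weights):
    """Rescale the scores so the best camera in the category gets exactly 100."""
    raw = {name: weighted_score(fs, weights) for name, fs in cameras.items()}
    best = max(raw.values())
    if best == 0:
        # Every camera scored zero, so there is nothing to rescale against.
        return {name: 0.0 for name in raw}
    return {name: round(100.0 * score / best, 1) for name, score in raw.items()}


# Hypothetical weights and feature scores, purely for illustration.
weights = {"sensor": 40.0, "screen_resolution": 25.0, "popularity": 20.0}
category = {
    "Camera X": {"sensor": 0.9, "screen_resolution": 0.8, "popularity": 0.5},
    "Camera Y": {"sensor": 0.6, "screen_resolution": 0.9, "popularity": 0.9},
}

scores = relative_scores(category, weights)
print(scores)
```

With these invented numbers Camera X comes out on top, so it is the one that gets 100 and Camera Y is expressed relative to it.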

However, for someone like me, what Snapsort offers is still not enough, as I would prefer to assign my own weightage to the key features, instead of depending on the weightage assigned by Snapsort.

You might wonder why that is – I am but a layman – and I should probably just leave the importance of technical details to the experts. And I would almost agree with you, except for the fact that, for example, I don’t think that a ‘feature’ like Popularity deserves any weightage, let alone a weightage of 4.6%, viz. 20 out of 437.5 points (437.5 points is calculated by adding all the weights assigned to the features here), as it depends largely on the manufacturer’s marketing and distribution strength. Similarly, I would give Screen Resolution a lower weightage than 25/437.5 (5.7%) and give a weightage of zero to Touch Screen instead of 12.5/437.5 (2.9%).
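Each of those percentages is simply the feature’s weight divided by the 437.5-point total. Here is a quick Python check using only the three weights quoted in this post (the variable names are my own):

```python
TOTAL_WEIGHT = 437.5  # sum of all feature weights, as quoted above

# Only the three weights mentioned in this post; the full list is not reproduced here.
quoted_weights = {
    "Popularity": 20.0,
    "Screen Resolution": 25.0,
    "Touch Screen": 12.5,
}

# Share of the total Score that each feature controls, in percent.
shares = {
    name: round(100.0 * weight / TOTAL_WEIGHT, 1)
    for name, weight in quoted_weights.items()
}
for name, share in shares.items():
    print(f"{name}: {share}%")

# Points left in play if Popularity and Touch Screen were given zero weight.
remaining = TOTAL_WEIGHT - quoted_weights["Popularity"] - quoted_weights["Touch Screen"]
print(f"Remaining total: {remaining}")
```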

Also, if you were to proceed to compare these two cameras, the output leaves a lot to be desired.

As you probably know, these two cameras belong to entirely different categories (the PLD-TZ10 to Travel Zoom, the N-D5100 to entry-level dSLR), and on some features, like the lens, they are not comparable. However, if you look closely, you will find that the Panasonic Lumix DMC-TZ10 is still allotted a Score towards the lens features, even though the Nikon D5100 cannot compete in that category.

If it were up to me, I would split the Score allocation between cameras into three sets of features –

– Common Features (where both cameras can compete)

– Camera A Features only (features where Camera B cannot compete due to ineligibility towards comparison category)

– Camera B Features only (features where Camera A cannot compete due to ineligibility towards comparison category)

A true comparison would showcase these three sets of Scores whenever cameras are compared. For the Camera A Features only set, the engine would rate the features of Camera A against other cameras in its category, and create a Relative A score to supplement the Common Features Score of A. In the same way, Camera B Features would be rated against others in its category to derive a Relative B score that would supplement the Common Features Score of B. This relative comparison against each camera’s own peers might not be very useful for the end-user, as he/she is primarily looking to compare camera A versus camera B. However, it cannot be emphasized enough that the comparison has to be fair, and by that we mean that, for users looking for an apples-to-apples comparison, only Common Features should be compared and rated against one another.

It is also necessary to state here that there is a difference between a score of 0 due to the absence of a feature, and a score of 0 due to the ineligibility of a feature category (Not Applicable) – and that difference should be clearly stated to help the user understand the reason behind the Score, and to nudge less-informed users towards comparing cameras that belong to the same category.
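Here is a rough sketch of how that split, and the difference between a 0 and a Not Applicable, could be represented. It is only my own illustration in Python: the feature names and scores are invented, and using `None` to mean Not Applicable is an assumption of this sketch, not something Snapsort does.

```python
NOT_APPLICABLE = None  # the feature category does not apply to this type of camera


def split_features(camera_a, camera_b):
    """Split feature names into (common, A-only, B-only) lists.

    A feature counts for a camera when its score is not NOT_APPLICABLE.
    A score of 0.0 means "applicable but absent" and still counts.
    """
    common, a_only, b_only = [], [], []
    for name in sorted(set(camera_a) | set(camera_b)):
        a_ok = camera_a.get(name, NOT_APPLICABLE) is not NOT_APPLICABLE
        b_ok = camera_b.get(name, NOT_APPLICABLE) is not NOT_APPLICABLE
        if a_ok and b_ok:
            common.append(name)
        elif a_ok:
            a_only.append(name)
        elif b_ok:
            b_only.append(name)
    return common, a_only, b_only


def describe(score):
    """Render a feature score so that 'absent' and 'not applicable' look different."""
    if score is NOT_APPLICABLE:
        return "N/A (not comparable for this camera type)"
    if score == 0:
        return "0 (feature absent)"
    return f"{score:g}"


# Invented feature scores for a travel zoom and an entry-level dSLR.
travel_zoom = {"sensor": 0.5, "built_in_zoom": 0.9, "touch_screen": 0.0, "lens_mount": None}
dslr = {"sensor": 0.9, "built_in_zoom": None, "touch_screen": 0.0, "lens_mount": 0.8}

common, a_only, b_only = split_features(travel_zoom, dslr)
print("Common:", common)
print("Travel zoom only:", a_only)
print("dSLR only:", b_only)
print("dSLR built-in zoom:", describe(dslr["built_in_zoom"]))
print("dSLR touch screen:", describe(dslr["touch_screen"]))
```

Only the features in `common` would feed the head-to-head comparison; the other two lists would be scored against each camera’s own category.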

Needless to say, my purchase decisions were made after comparing cameras within their respective categories, but not before I normalized the features according to my preferences, which meant spending more than a few hours in Excel – first capturing information about different cameras, and then assigning the weightages that worked for me.

This, for me, is the future of decision science comparison engines –

The freedom to let users choose which features are important to them and then show customized comparison results according to each user’s priorities.
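As a sketch of what that could look like under the hood, the Python below re-ranks cameras after a user overrides some of the default weights. Every name, weight and score here is hypothetical. It is not how Sortable’s engine works, just the simplest version of the idea.

```python
def rank_cameras(cameras, default_weights, user_weights=None):
    """Return (name, score) pairs, best first, using user overrides where given."""
    weights = {**default_weights, **(user_weights or {})}
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("at least one feature needs a positive weight")
    ranked = []
    for name, feature_scores in cameras.items():
        raw = sum(w * feature_scores.get(f, 0.0) for f, w in weights.items())
        ranked.append((name, round(100.0 * raw / total, 1)))
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)


# Hypothetical default weights and per-feature scores (0..1).
default_weights = {
    "image_quality": 40.0,
    "screen_resolution": 25.0,
    "popularity": 20.0,
    "touch_screen": 12.5,
}
cameras = {
    "Camera X": {"image_quality": 0.9, "screen_resolution": 0.6,
                 "popularity": 0.3, "touch_screen": 0.0},
    "Camera Y": {"image_quality": 0.7, "screen_resolution": 0.9,
                 "popularity": 0.9, "touch_screen": 1.0},
}

# A user who doesn't care about popularity or touch screens,
# and cares less than the default about screen resolution.
my_weights = {"popularity": 0.0, "touch_screen": 0.0, "screen_resolution": 10.0}

default_ranking = rank_cameras(cameras, default_weights)
my_ranking = rank_cameras(cameras, default_weights, my_weights)
print("Default ranking:", default_ranking)
print("My ranking:", my_ranking)
```

With these made-up numbers the order flips: Camera Y leads under the default weights, while Camera X leads once popularity and touch screen are zeroed out.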

I have enough faith in Sortable (seeing what they have done with Snapsort, LensHero, CarSort and Geekaphone) to believe that this is something they are equipped to offer, and that it would take them minimal time to introduce.

The real question is – Would you like to use a feature like this if it existed?

Also, while we are on the topic of comparison sites for cameras, here’s another suggestion that is open for implementation –

The transition away from technical specs to the user expectation and experience

Let’s face it, most people who purchase a camera are never going to delve into the nitty-gritty of what their cameras can do or spend 10,000 hours trying to master the learning curve. Most people want to buy a camera to take good snaps, and even though they might not be sure how good they can be as photographers, most of them would like to believe they can be as good as the camera lets them be.

Which is why I’m surprised that I haven’t come across a site that showcases images taken across different cameras as an integral part of the comparison process. Users should be able to browse through collections of images, segregated across different camera models, to let them understand how good (or bad) the camera is. Currently, the user experience I’m imagining is something along the lines of the Pinterest feed.

A user looking to buy a Nikon D5100 should be able to browse through his feed to view photos that have been taken using the Nikon D5100. The same goes for every camera. This will not only allow the user to experience first-hand the quality of images that can be taken, but also set (exceed, or temper) the expectations that users might have of their new cameras.

While browsing, users could also choose to tweak their feed by –

– choosing which categories of photos they want to view (the usual landscapes, portraits, bokeh, action, low light, fish eye, tilt-shift, etc), and/or

– choosing which camera manufacturers they want to view, and/or

– deciding their spend budget (on cameras, lenses, etc)
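Filters like these are easy to sketch. The Python below assumes a hypothetical `Photo` record with the camera model, manufacturer, a category tag and the camera’s price. None of these fields come from a real site, and a real feed would sit on a database rather than a list.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional, Set


@dataclass(frozen=True)
class Photo:
    url: str
    camera_model: str
    manufacturer: str
    category: str        # e.g. "landscape", "portrait", "low light"
    camera_price: float  # price of the camera that took the photo


def filter_feed(
    photos: Iterable[Photo],
    categories: Optional[Set[str]] = None,
    manufacturers: Optional[Set[str]] = None,
    max_budget: Optional[float] = None,
) -> List[Photo]:
    """Keep photos that pass every filter supplied; None means no restriction."""
    feed = []
    for photo in photos:
        if categories is not None and photo.category not in categories:
            continue
        if manufacturers is not None and photo.manufacturer not in manufacturers:
            continue
        if max_budget is not None and photo.camera_price > max_budget:
            continue
        feed.append(photo)
    return feed


# Invented sample data; the brands, models, prices and URLs are placeholders.
photos = [
    Photo("https://example.com/1.jpg", "Model A1", "Brand A", "landscape", 650.0),
    Photo("https://example.com/2.jpg", "Model B1", "Brand B", "portrait", 900.0),
    Photo("https://example.com/3.jpg", "Model A2", "Brand A", "low light", 300.0),
    Photo("https://example.com/4.jpg", "Model B2", "Brand B", "landscape", 450.0),
]

feed = filter_feed(photos, categories={"landscape", "low light"}, max_budget=500.0)
for photo in feed:
    print(photo.camera_model, photo.category, photo.camera_price)
```

Because every filter is optional, the same function covers the “and/or” in the list above: a user can set one filter, all three, or none.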

Once a user has gone through the photographs and narrowed down his choice of camera, the advertising links on the website should point him to the cheapest purchase option available.

Needless to say, whether these photo uploads should be with or without any post-processing is a matter of debate. There are a lot of people out there who believe that basic post-processing is absolutely required, and although I might agree with them, it might not serve the purpose behind this solution, which is to showcase to prospective buyers what their new cameras are capable of, preferably right out of the box. However, even if we choose to allow only untouched straight-from-the-camera pictures, I’m not sure there is a reliable way to prevent users from uploading post-processed images. Some editors record their name in the Exif Software field, but as far as I know that metadata can just as easily be left untouched or stripped.

I think this idea is definitely worth a shot because a site like this can easily take off with active community support, by creating a sense of competition not only between users of different cameras (my Nikon is better than your Canon) but also between users of the same camera (wow, I must really learn how to make my camera do that!).

However, until this vision becomes a reality (and trust me, some day it will), I guess most of us will have to stick to the likes of Snapsort, which is still, by far, the best (read: only) camera comparison site that can cater to both newbies and geeks.
